Technology and Social Change

Logical Argumentation: Building Strong Arguments and Avoiding Common Errors

Introduction

Why This Lecture?

- Tech and development debates often sound convincing

- Many rely on flawed reasoning or missing evidence

Two core skills for this class and beyond:

- Build clear, evidence-based arguments

- Detect flawed reasoning in others’ claims

Roadmap

- Anatomy of a good argument

- Deductive vs. inductive reasoning

- Eleven logical fallacies to avoid

- Practice exercises

So What?

From persuasion to logic

- Sounding persuasive is not the same as being right

- Structure separates strong claims from noise

Next → What does a well-built argument look like?

I. Anatomy of a Good Argument

What Is an Argument?

Not a fight — a structured case

An argument is a claim supported by evidence and reasoning

Four essential components:

- Claim: states your position

- Evidence: supports the claim with facts or data

- Warrant: explains why evidence supports the claim

- Scope: qualifies where and when the claim holds

The Four Components

How they fit together

- Claim: state your position

- Evidence: facts, data, studies

- Warrant: why the evidence supports the claim

- Scope: where and when the claim holds

Weak Argument

What’s wrong here?

“AI is dangerous because it will take all our jobs.”

- ✗ Vague claim — which jobs? Where?

- ✗ No evidence cited

- ✗ No mechanism explained

- ✗ No scope or limits stated

Strong Argument

The same topic, done right

“AI-driven automation could displace 85 million jobs globally by 2025 (World Economic Forum, 2020), primarily in routine clerical and manufacturing tasks in advanced economies.”

- ✓ Specific, testable claim

- ✓ Named source with a number

- ✓ Mechanism: routine task automation

- ✓ Scope: specific job types and regions
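One way to make the checklist concrete is to treat an argument as a record with four slots and ask which ones are empty. The sketch below is only an illustration: the `Argument` class and its `missing` method are hypothetical names, and the warrant text is a paraphrase of the "routine task automation" mechanism rather than a quotation.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Argument:
    """The four components from this lecture; None means 'not stated'."""
    claim: Optional[str] = None
    evidence: Optional[str] = None
    warrant: Optional[str] = None
    scope: Optional[str] = None

    def missing(self) -> list[str]:
        """Return the names of the components that were left unstated."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# The weak example states a claim and nothing else.
weak = Argument(claim="AI is dangerous because it will take all our jobs.")

# The strong example fills every slot (warrant paraphrased for illustration).
strong = Argument(
    claim="AI-driven automation could displace 85 million jobs globally by 2025",
    evidence="World Economic Forum, 2020",
    warrant="Routine tasks are the ones automation replaces",
    scope="Routine clerical and manufacturing tasks in advanced economies",
)

weak_gaps = weak.missing()
strong_gaps = strong.missing()
print(weak_gaps)    # ['evidence', 'warrant', 'scope']
print(strong_gaps)  # []
```

A check like this only detects absent components. It cannot tell whether a claim is vague or whether the evidence is any good, which is still a judgment call.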

Qualifying Your Claims

Precision builds credibility

| Overconfident | Qualified |
|---|---|
| AI will destroy all jobs | AI is likely to displace routine tasks |
| AI causes discrimination | AI can reproduce biases in training data |
| AI regulation always stifles innovation | Regulation’s effect depends on design and enforcement |

Strong writers qualify claims — weak writers hide behind absolutes.

But Wait: Isn’t “AI Will Take Your Job” More Memorable?

The tension between precision and punch

- Absolutes are sticky: “AI will destroy jobs” lands harder

- But sticky ≠ correct — audiences notice overstatement

The solution: lead sharp, then qualify immediately

- “AI is coming for routine jobs — and the data backs it up”

- Memorable and defensible

The goal is not to sound boring. It’s to sound confident and precise.

Try It: Rewrite for Punch + Precision

Boring but accurate: “Some studies suggest AI may be associated with job displacement in certain sectors under specific conditions.”

Sharp and qualified: ???

Take 30 seconds — rewrite this so it’s both memorable and defensible.

One version: “AI is already replacing call center workers and data entry clerks — and the trend is accelerating in economies that can’t retrain fast enough.”

II. Common Argument Structures

Deductive Reasoning

Start with a rule, apply it to a case

General rule: automation of routine tasks displaces workers

Specific case: AI automates clerical and manufacturing tasks

∴ Conclusion: AI will displace workers in those roles

- Why it’s powerful: if the rule and the case are both true, the conclusion must follow

- The catch: the rule itself might be wrong or incomplete
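The "must follow" part can be checked mechanically. The sketch below tries every combination of true and false for two statements ("the tasks are automated", "the workers are displaced") and confirms that no combination makes the rule and the case true while the conclusion is false. The helper names `implies` and `is_valid` are hypothetical, and the reversed form at the end is added only for contrast; it is not on the slide.

```python
from itertools import product


def implies(p: bool, q: bool) -> bool:
    """Material implication: 'if p then q' fails only when p is true and q is false."""
    return (not p) or q


def is_valid(premises, conclusion) -> bool:
    """An argument form is valid if no truth assignment makes every
    premise true while the conclusion is false."""
    for automated, displaced in product([True, False], repeat=2):
        premises_hold = all(p(automated, displaced) for p in premises)
        if premises_hold and not conclusion(automated, displaced):
            return False
    return True


# General rule: automation of routine tasks displaces workers.
rule = lambda automated, displaced: implies(automated, displaced)
# Specific case: AI automates these tasks.
case = lambda automated, displaced: automated

# Rule + case, therefore displacement: the deductive form used above.
forward = is_valid([rule, case], lambda automated, displaced: displaced)
print(forward)  # True

# Contrast (not from the slide): rule + displacement, therefore automation.
observed = lambda automated, displaced: displaced
reverse = is_valid([rule, observed], lambda automated, displaced: automated)
print(reverse)  # False
```

Validity is a property of the form only. The check says nothing about whether the general rule is actually true, which is exactly the catch noted above.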

Inductive Reasoning

Specific observations → general conclusion

Patterns suggest but don’t guarantee a conclusion:

- AI hiring tools showed gender bias at Amazon (2018)

- Facial recognition misidentified darker-skinned faces more often (Buolamwini & Gebru, 2018)

- Predictive policing amplified racial disparities in the U.S.

- ∴ AI systems tend to reproduce biases in training data

- Strength: grounded in observed evidence

- Risk: new cases could disprove the pattern

Two Directions of Reasoning

Most academic arguments use both: induction to find patterns, deduction to apply them.

Deductive vs. Inductive

When to use each

| | Deductive | Inductive |
|---|---|---|
| Direction | General → Specific | Specific → General |
| Certainty | Certain (if premises true) | Probable |
| Best for | Applying theories | Building from evidence |
| Watch for | False premises | Hasty generalization |

Both are powerful — but both can go wrong in predictable ways. Next: eleven common errors.

III. Logical Fallacies

What Is a Fallacy?

- Errors in reasoning that appear persuasive

- Can mislead whether used deliberately or by accident

Two goals for this section:

- Recognize fallacies in others’ arguments

- Avoid them in your own

Fallacy Families

Three categories of errors

| Evidence Errors | Reasoning Errors | Rhetorical Tricks |
|---|---|---|
| Cherry Picking | False Dilemma | Ad Hominem |
| Hasty Generalization | Strawman | Appeal to Authority |
| Correlation ≠ Causation | Slippery Slope | Appeal to Emotion |
| Ecological Fallacy | Sunk Cost | |

Rhetorical Tricks

Ad Hominem

Rhetorical Trick

- Attacks the person, not the argument

- Shifts focus from evidence to character

- Watch for: insults, motive questioning, associations

Examples

- “She’s not a scientist — ignore her climate views”

- “He’s a billionaire — of course he defends AI”

What’s Wrong With This?

“Elon Musk says AI is humanity’s biggest threat. He’s a genius — that settles it.”

The fallacy: Appeal to Authority

- Uses a famous person’s opinion as evidence

- Musk is an engineer, not an AI safety researcher

- Reputation ≠ expertise on the specific topic

Appeal to Emotion

Rhetorical Trick

“Picture a five-year-old asking: ‘Mommy, will a robot take your job?’ That’s the future if we don’t act NOW.”

- Manipulates fear or pity instead of presenting evidence

- The image is vivid — but what’s the actual data?

- Watch for: dramatic stories, loaded language, urgency without facts

Evidence Errors

Cherry Picking

Evidence Error

- Selects evidence that supports a claim

- Ignores data that contradicts it

- Watch for: one-sided statistics, single studies cited

Examples

- “China’s GDP growth proves authoritarianism drives development”

- “This one study shows no climate impact”

Hasty Generalization

Evidence Error

- Draws conclusions from insufficient evidence

- Overgeneralizes from one case or small samples

- Watch for: anecdotes treated as universal proof

Examples

- “My cousin’s factory closed — free trade destroys jobs”

- “One village doesn’t use solar — renewables fail in Africa”

Ecological Fallacy

Evidence Error

- Infers individual traits from group-level data

- Group averages don’t apply to every member

- Watch for: national statistics applied to individuals

Examples

- “India’s tech sector booms, so all Indians benefit”

- “Average income is high, so all residents are wealthy”
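A small worked example shows how far a group average can sit from its typical member. The incomes below are made up for illustration (a ten-person town with one very high earner); nothing here comes from real data.

```python
from statistics import mean, median

# Hypothetical annual incomes (USD): nine modest earners and one very high earner.
incomes = [30_000] * 9 + [2_000_000]

average = mean(incomes)    # pulled upward by the single high earner
typical = median(incomes)  # the middle of the distribution
share_below_average = sum(x < average for x in incomes) / len(incomes)

print(f"Average income: {average:,.0f}")                        # Average income: 227,000
print(f"Median income:  {typical:,.0f}")                        # Median income:  30,000
print(f"Share below the average: {share_below_average:.0%}")    # Share below the average: 90%
```

In this toy town the statement "average income is high" is true, yet nine of ten residents earn far below the average. The group-level number is accurate; the inference to individuals is what fails.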

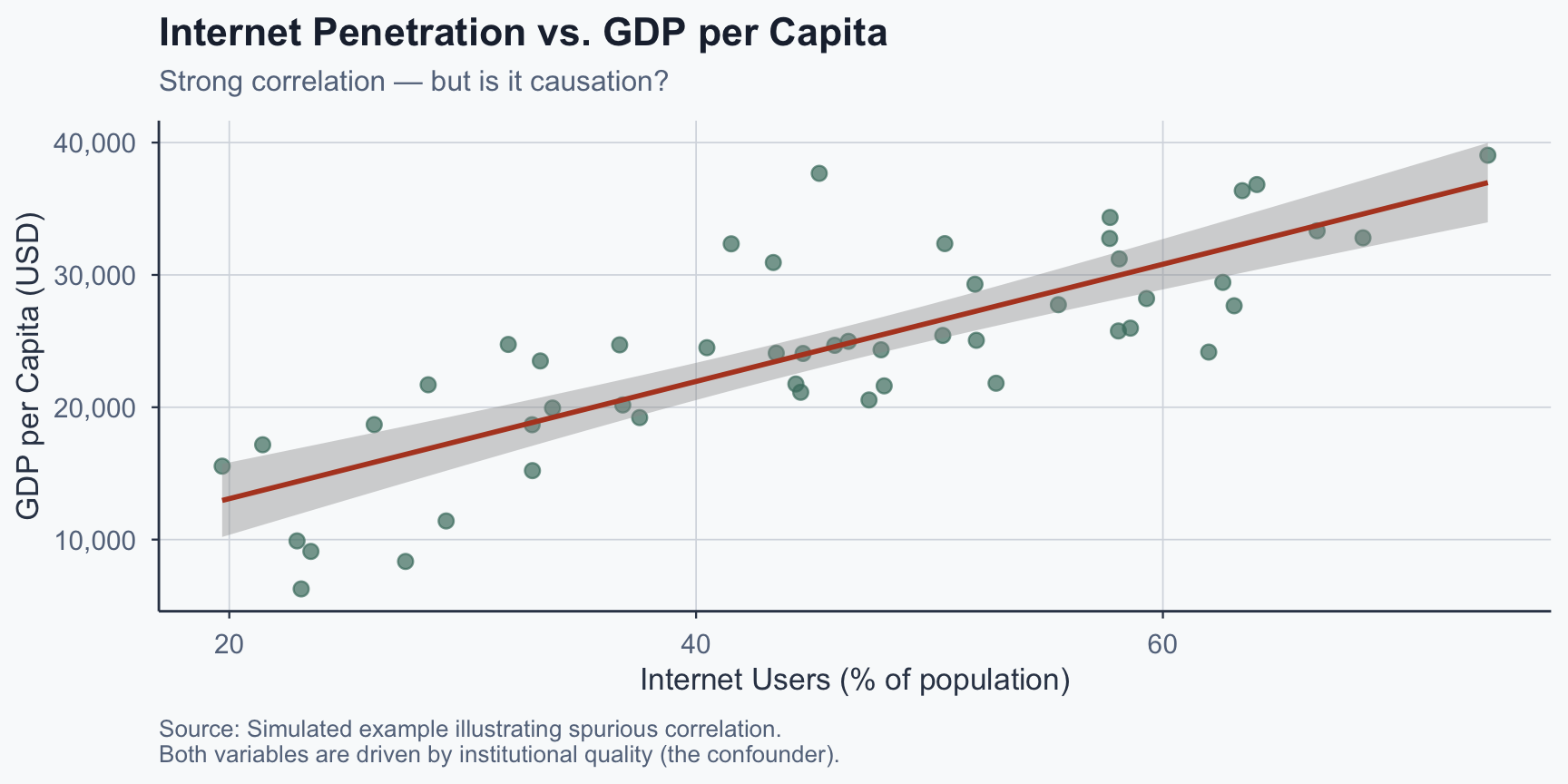

Correlation ≠ Causation

Evidence Error

- Assumes co-occurrence proves a causal link

- Ignores confounders and reversed causality

- Watch for: “X increases with Y, therefore X causes Y”

Examples

- “More internet users → higher GDP, so internet causes growth”

- “Laptop users get worse grades — ban laptops!”

How Confounders Mislead

The hidden variable problem

The confounder (wealth) drives both variables, creating a spurious correlation.

Seeing Correlation in Action

Figure 1: Correlation driven by a hidden confounder.
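The hidden variable problem can be reproduced with a short simulation. In the sketch below a confounder (`wealth`) drives two outcomes, `x` and `y`, that have no effect on each other. The slide does not name the two variables, so these are generic placeholders, and the effect sizes and noise levels are arbitrary assumptions.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    var_x = sum((a - mx) ** 2 for a in xs)
    var_y = sum((b - my) ** 2 for b in ys)
    return cov / (var_x * var_y) ** 0.5


rng = random.Random(0)  # fixed seed so the run is repeatable
n = 5000

# The confounder.
wealth = [rng.gauss(0, 1) for _ in range(n)]

# Each outcome is wealth plus its own independent noise.
# Neither outcome appears in the other's formula: there is no causal link.
x = [w + rng.gauss(0, 1) for w in wealth]
y = [w + rng.gauss(0, 1) for w in wealth]

raw = pearson(x, y)

# Remove the part of each outcome explained by wealth. The effect size
# is known to be 1 here only because we wrote the simulation ourselves.
x_resid = [a - w for a, w in zip(x, wealth)]
y_resid = [b - w for b, w in zip(y, wealth)]
adjusted = pearson(x_resid, y_resid)

print(f"Correlation ignoring wealth:      {raw:.2f}")
print(f"Correlation after removing wealth: {adjusted:.2f}")
```

With these settings the raw correlation should land near 0.5 and the adjusted one near zero. In real data the confounder's effect is not known in advance and has to be estimated, for example with regression, so this is a best-case illustration.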

Reasoning Errors

What’s Wrong With This?

“Either we ban AI entirely, or we accept mass unemployment. There’s no middle ground.”

The fallacy: False Dilemma

- Presents only two options when more exist

- What about regulation, retraining, UBI, gradual adoption?

- Watch for: “either… or” framing that hides alternatives

Strawman

Reasoning Error — A real debate example

What was actually said:

“We should require AI companies to disclose their training data sources.”

The strawman version:

“They want to shut down AI research and hand control of technology to bureaucrats!”

- Distorts the original to make it easier to attack

- Watch for: extreme restatements, words never said

Slippery Slope

Reasoning Error

- Assumes one action leads to extreme outcomes

- No evidence for the claimed causal chain

- Watch for: “if we allow X, then Y, then Z…”

Examples

- “Regulate AI today → stifle all innovation permanently”

- “Allow gene editing for disease → designer babies next”

Sunk Cost Fallacy

Reasoning Error

- Past investments drive decisions, not future benefits

- Justifies continuing a failing endeavor

- Watch for: “we’ve already spent too much to stop”

Examples

- “We spent $2B on this dam — we can’t stop now”

- “Three years in this startup — I must keep going”
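The forward-looking rule behind this slide can be written as a one-line decision function. The sketch below is hypothetical: `should_continue` is an illustrative name, and every figure except the $2B already spent on the dam is invented.

```python
def should_continue(future_benefit: float, future_cost: float,
                    sunk_cost: float = 0.0) -> bool:
    """Continue only if what we still stand to gain exceeds what we still
    have to pay. sunk_cost is accepted but deliberately ignored."""
    return future_benefit > future_cost


# Hypothetical dam, figures in billions of USD:
# finishing costs 1.5 more and is expected to return 1.0.
with_sunk = should_continue(future_benefit=1.0, future_cost=1.5, sunk_cost=2.0)
without_sunk = should_continue(future_benefit=1.0, future_cost=1.5, sunk_cost=0.0)

print(with_sunk)     # False
print(without_sunk)  # False
```

The answer is the same whether $2B or nothing has been spent so far. If a decision changes only because the past spending is mentioned, that is the fallacy at work.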

In Practice, Fallacies Overlap

Real arguments are messier than a taxonomy

“Africa received billions in aid and is still poor. Aid is a failure.”

- Hasty Generalization: one continent, dozens of different outcomes

- Cherry Picking: ignores countries where aid worked

- Ecological Fallacy: a continent-wide outcome is applied to every country in it

When diagnosing an argument, don’t stop at the first fallacy you spot — look for layers.

IV. Practice

Exercise 1: Spot the Fallacy

Identify the error in each statement

- “Bill Gates says nuclear solves climate change. Case closed.”

→ Appeal to Authority — rests on reputation, not evidence

- “Africa got billions in aid and is still poor. Aid fails.”

→ Hasty Generalization — ignores variation across countries

- “Let tech companies self-regulate → they’ll control governments.”

→ Slippery Slope — no evidence for the causal chain

Exercise 2: Fix the Argument

Rewrite to make it credible and qualified

Weak: “Social media is destroying democracy.”

Possible fix: “Allcott & Gentzkow (2017) document that fabricated news stories were shared tens of millions of times on Facebook ahead of the 2016 U.S. election. Algorithmic amplification of such content can undermine informed participation, especially where media literacy is low.”

Exercise 2 (continued)

Weak: “The Green Revolution proved technology solves hunger.”

What’s wrong: treats one case as universal proof (hasty generalization), ignores environmental costs and unequal access (cherry picking), removes all scope.

Possible fix: “The Green Revolution increased cereal yields 2–3x in South and Southeast Asia between 1965 and 1985 (Evenson & Gollin, 2003), but gains were concentrated among farmers who could afford inputs, and environmental costs were significant.”

Exercise 3: Build an Argument

Choose one claim (5 minutes to write)

Pick one:

- “AI will widen inequality between rich and poor countries”

- “Universal basic income beats retraining for automation”

- “Open-source tech accelerates development more than proprietary”

Exercise 3: Template

Fill in each box

| Component | Your argument |
|---|---|
| Claim | ___ (your position in one sentence) |
| Evidence | ___ (one fact, study, or case) |
| Warrant | ___ (why this evidence supports the claim) |
| Scope | ___ (where does it hold? what are the limits?) |

Exercise 4: Debate Swap

Test your argument against a partner (10 minutes)

3 min — read your partner’s argument silently and note:

- Any fallacies in their reasoning?

- Missing evidence or unwarranted leaps?

- Where could the scope be tighter?

4 min — exchange feedback (2 min each)

3 min — revise your argument, then one pair presents

So What?

From exercises to everyday thinking

- These are not just classroom skills

- Every policy debate, news article, and pitch uses arguments

Your challenge → spot one fallacy in the wild this week

Conclusion

Key Takeaways

- Strong arguments have claim, evidence, warrant, and scope

- Deductive applies principles; inductive builds from evidence

- Qualifying claims makes them stronger, not weaker

- Fallacies are reasoning errors, not necessarily lies

- They cluster into evidence, reasoning, and rhetorical errors

- The best way to improve: build, break, rebuild

Popescu (TEC) Technology & Social Change — Logical Argumentation